Everyone Says AI Killed Coding. Here’s Why FDE Hiring Managers Disagree

Should You Still Learn to Code in 2026 to Get Hired as an FDE?

Hey, Prasad here 👋 I’m the voice behind the weekly newsletter “Big Tech Careers.”

This week I bring you another guest post from Jove Zhong, Head of FDE @ Cresta! In this article, he shares his insights on why and how you should learn to code in 2026.

If you like the article, click the ❤️ icon. That helps me know you enjoy reading my content.

Over to you, Jove!

Last weekend I was one of the judges at the Cursor Hackathon at UBC Vancouver. (Small confession: it’s a 10-minute drive from my place, and I feel guilty that I don’t visit the campus more often.)

Students, AI engineers, even non-tech users — building something complete in 3–5 hours. That would’ve sounded crazy 2 years ago.

I won’t lecture you with deep “insights.” But I left with 3 observations that stuck with me — and they go straight to the question I was asked over and over again: Should I still learn programming? Should I take CS courses? Is coding even worth learning anymore?

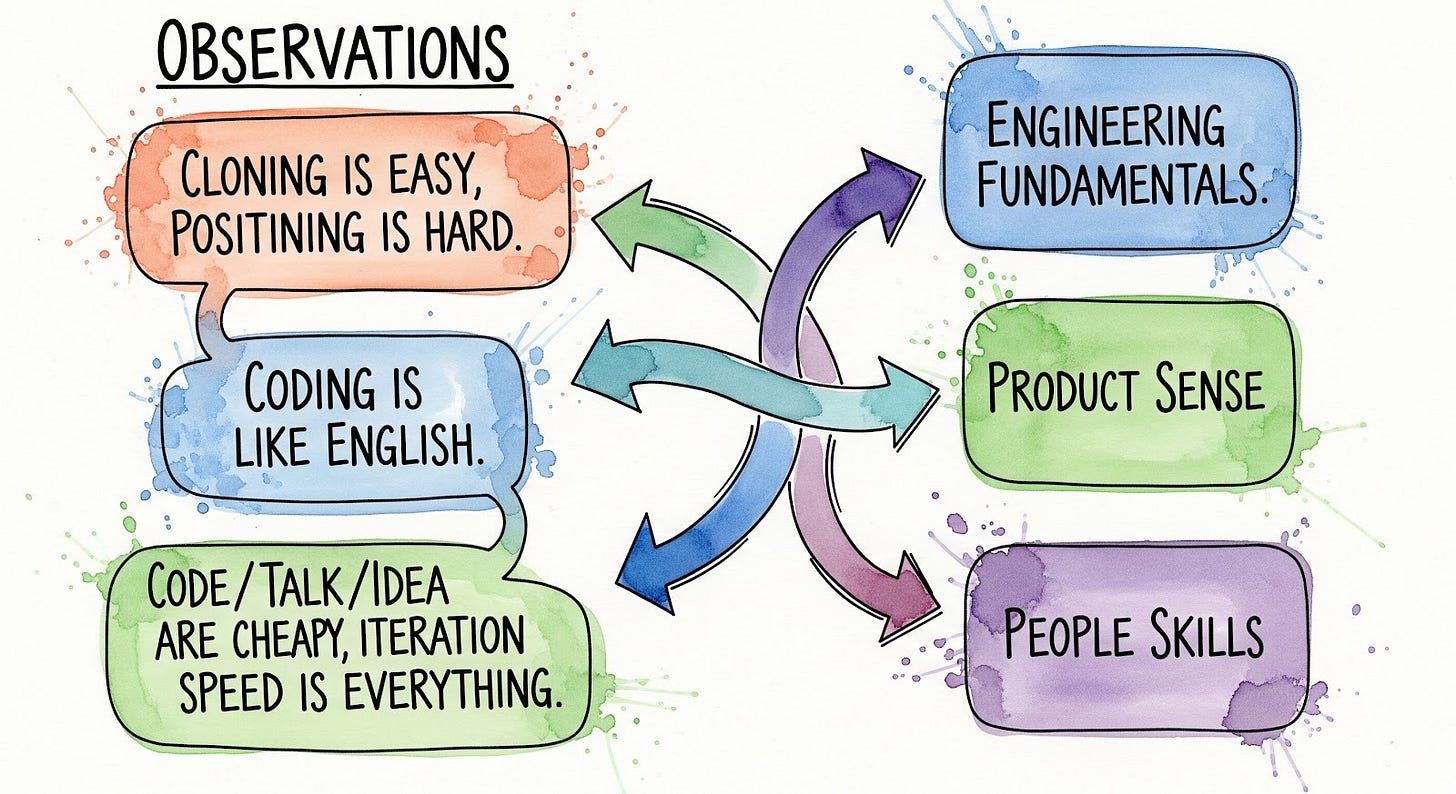

Observation 1: Cloning is easy. Positioning is hard

In 4 hours, I saw clones of Wispr Flow, OpenAI browser, CoderPad.

With today’s models and coding assistants, you don’t need to reverse engineer anything. You can just build it.

But building a handy desktop tool is rarely a strong business case. SaaS products last when they provide real collaboration, workflow depth, or complex platform features.

The bar for “I built this over a weekend” is low. The bar for “I’d pay for this every month” is still high.

This is the first lesson: knowing how to code is no longer enough on its own. The question is what you combine it with.

Observation 2: Coding is like English

Knowing how to code is like knowing how to speak English.

Great. But are you Shakespeare? Or JK Rowling?

Or do you combine it with something else — BioTech + Coding, Voice AI + Hiring, Security + Agents?

Know your ICP (Ideal Customer Profile), as narrow as possible.

“Next-gen CoderPad” doesn’t excite me. “CoderPad for candidates as voice AI agent developers” makes me pause.

Specificity wins.

This is the second lesson: product sense and domain knowledge are the multipliers. A programmer who understands a specific industry — healthcare, legal, finance, voice AI — is exponentially more valuable than one who just knows syntax.

Observation 3: Code is cheap. Talk is cheap. Idea is cheap. Iteration speed is everything

After judging, I started using one of the projects. I opened 6 tickets at 8pm. 5 got resolved at 9pm. Sunday night!

That’s the new software loop. You can code from a laptop with an IDE, a text editor, or just a CLI. You can even code from a mobile app, or over Telegram/WhatsApp with OpenClaw.

Code is cheap. Talk is cheap. Idea is cheap. Iteration speed is everything. Use the best coding model (e.g. Opus) and the best coding agent (yes, Claude Code); don’t waste time on cheaper or smaller models. And don’t waste your own time typing everything by hand, hitting Cmd+F to search documentation for keywords, or writing design docs and test cases yourself.

Which brings me to the real topic of the blog:

“So, Should You Still Learn Programming in 2026?”

Yes. But not for the reason you think.

Not so you can write code. AI does that.

You need to be a good software engineer so you don’t blindly trust AI. That means learning programming, yes — but also taking CS courses like data structures, databases, system design, and understanding how hardware works. You don’t need a CS degree specifically. But you need the knowledge those courses teach.

If you can’t read code, evaluate code, or understand what’s happening under the hood, you’re not an engineer — you’re a relay between the user and Claude Code. Then why would I hire you, and not someone else who also knows how to commit code generated by Claude?

The answer is: I hire the person who can tell when the AI is wrong, who can direct it toward the right solution, and who understands the customer problem well enough to know what “right” even means.

That requires 3 things the hackathon made crystal clear: engineering fundamentals, product sense, and people skills. Let me connect each back to the observations.

Engineering Fundamentals: Why You Can’t Skip Them

Everyone at the hackathon could build something. The ones who built something good understood what was happening under the hood. They caught edge cases. They didn’t just ship — they shipped something that worked.

Without hands-on engineering experience and foundational CS knowledge — whether from a degree, self-study, or years in the field — you’ll struggle to evaluate what AI gives you.

You won’t know:

What a deadlock is when the AI’s solution creates one

The difference between an OLTP and an OLAP database when the AI picks the wrong one: when to use SQLite, when to use DuckDB, and which logic runs in the browser versus on the server

Why AI-generated code often only works on the happy path — it looks fine in a demo with 100 rows, but breaks in production with real data, real concurrency, and real edge cases

What SQL injection looks like in the code Claude just generated
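To make that last bullet concrete, here is a minimal sketch of what SQL injection can look like, using Python's built-in `sqlite3` module. The `users` table, the function names, and the payload are all made up for illustration; this is not code from any real project.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", 1), ("bob", 0)],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[str]:
    # Vulnerable: user input is spliced into the SQL text itself,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[str]:
    # Safer: the value is passed as a bound parameter, separate from the SQL text.
    query = "SELECT name FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


conn = make_db()
payload = "nobody' OR '1'='1"  # illustrative attacker-controlled input

unsafe_rows = find_user_unsafe(conn, payload)  # matches every user
safe_rows = find_user_safe(conn, payload)      # matches no user

print("unsafe:", unsafe_rows)
print("safe:  ", safe_rows)
```

The two functions differ by a few characters, and both look fine in a demo where nobody types a quote character. Spotting that difference in a diff is the skill the bullet is about.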

Anthony Mein, one of the founding Forward-Deployed Engineers on our team who graduated in 2022 — just before ChatGPT — said it well: “I’m super thankful that I have that foundation because now when I’m working with AI, I’m spending more time reading code than I am writing code.”

He built foundations before AI was everywhere. Students today have to build them deliberately, in spite of AI being available: “Do your data structures and algorithms by hand, without AI.” More on that in “The Practical Gameplan” section below.

Product Sense: The Multiplier CS Programs Ignore

This connects directly to Observations 1 and 2: cloning is easy, positioning is hard; coding is like English, specificity wins.

At the hackathon, the teams that stood out weren’t the ones with the most impressive tech. They were the ones who could answer: “Who would pay for this, and why?”

Most CS programs teach you to build. Almost none teach you to figure out what to build. But in the AI era, the “what” is the hard part. The “how” is increasingly handled by AI.

This is exactly why the FDE (Forward-Deployed Engineer) role is growing while traditional engineering roles shrink. FDEs are deployed at customers. They understand the use case deeply enough to adapt the product in the field. They sit at the intersection of engineering, sales, and product — as we discussed in detail on the Forward-Deployed Caffeine podcast.

And it’s why the merge is happening: engineers need to think like PMs, and PMs need to build things like engineers. If you’re a PM who can’t read code or build things with AI, you’ll easily lose context in technical conversations. If you’re an engineer who can’t think about users, you’ll build things no one wants to use. The winners are the people in the middle — technical enough to build, product-minded enough to know what to build.

If you want to be safe in the next decade, develop product intuition alongside technical skills. Listen to customer calls. Study how products grow. Understand basic economics. Talk to users.

People Skills: The Moat AI Can’t Cross

Iteration speed is everything, but iteration requires collaboration with real humans.

Shipping fast means nothing if you’re shipping the wrong thing. And figuring out the right thing requires talking to customers, reading between the lines, and managing expectations — skills no model has.

You have to know when to say no to a client. You have to deliver bad news without losing the relationship. You have to read, over a Zoom call, whether someone is frustrated or just confused. As Anthony put it: “FDE is a really tricky role... You have to manage client expectations and you also need to be really friendly about this as well.”

AI can draft your email. It can’t build your reputation.

This is why traditional backend engineers, frontend engineers, and QA roles are under serious pressure — their primary output is code, and AI writes code. But FDEs and sales are safer. They require real-world interaction: presence, judgment, and trust. Things AI can assist with but not replace.

People skills compound over time in a way that technical skills don’t. The engineer who is technically average but excellent at building trust will outlast the brilliant engineer who can’t communicate.

The New Hiring Bar (What We Actually Test For)

So what does this all mean in practice? Let me tell you exactly what we look for when we hire FDEs. This has changed significantly.

Old interview: Can you implement a binary search tree? Can you reverse a linked list?

New interview: Given a real use case, a set of APIs, and your preferred AI tools — can you build a working AI agent? And then what?

Here’s what we actually care about:

1. Can you build a basic AI agent?

Not “write an algorithm from scratch.” Build something that connects to a real API, uses an LLM, and does something useful.

This is table stakes now. If you can’t scaffold a simple AI agent in a few hours, you’re behind.
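For readers who have never seen one, here is a rough sketch of the loop at the heart of a basic agent: ask the model, run a tool if it asks for one, feed the result back, and stop when it answers. Everything here is an assumption for illustration. `stub_model` stands in for a real LLM call, `lookup_order_status` stands in for a real customer API, and the message format is invented rather than taken from any vendor's SDK.

```python
from typing import Callable


def lookup_order_status(order_id: str) -> str:
    """Hypothetical tool; a real one would call a customer's API."""
    fake_orders = {"A100": "shipped", "A200": "processing"}
    return fake_orders.get(order_id, "unknown")


# Registry of tools the agent is allowed to call, keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order_status": lookup_order_status,
}


def stub_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM call: requests a tool once, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "answer", "text": f"Your order is {last['content']}."}
    return {"type": "tool_call", "name": "lookup_order_status", "argument": "A100"}


def run_agent(user_message: str, model=stub_model, max_steps: int = 5) -> str:
    """Loop until the model answers or the step limit is hit."""
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "answer":
            return reply["text"]
        tool = TOOLS.get(reply["name"])
        if tool is None:
            # Report the problem back to the model instead of crashing.
            result = f"error: unknown tool {reply['name']}"
        else:
            result = tool(reply["argument"])
        messages.append({"role": "tool", "content": result})
    return "Stopped: step limit reached."


answer = run_agent("Where is order A100?")
print(answer)
```

Swapping the stub for a real model call is the easy part. The step limit and the unknown-tool branch are the kind of details worth checking for when an AI assistant writes this loop for you.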

2. Can you iterate and evaluate it?

This is more important than building the initial agent.

Anyone can prompt Claude and get something that looks like it works. The real skill is:

How do you know if it’s actually working correctly?

How do you write evals?

How do you catch edge cases?

How do you measure quality over time?

Shipping an AI feature is easy. Knowing it’s performing well in production is hard.
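If “write evals” sounds abstract, a first version can be very small: a list of inputs with expected outputs, a loop, and a pass rate you track over time. The sketch below assumes a hypothetical `classify_intent` function as the agent under test (here a keyword stub, not a real model) and a handful of made-up cases.

```python
def classify_intent(message: str) -> str:
    """Hypothetical stand-in for an LLM-backed agent under test."""
    text = message.lower()
    if "refund" in text:
        return "refund"
    if "cancel" in text:
        return "cancel"
    return "other"


# Made-up eval cases: each pairs an input with the label we expect.
EVAL_CASES = [
    {"input": "I want a refund for my order", "expected": "refund"},
    {"input": "Please cancel my subscription", "expected": "cancel"},
    {"input": "What are your opening hours?", "expected": "other"},
    # Edge case: same intent, but phrased without the keyword.
    {"input": "I'd like my money back", "expected": "refund"},
]


def run_evals(agent, cases):
    """Run every case and return (pass_rate, list of failing cases)."""
    failures = []
    for case in cases:
        actual = agent(case["input"])
        if actual != case["expected"]:
            failures.append({**case, "actual": actual})
    pass_rate = (len(cases) - len(failures)) / len(cases)
    return pass_rate, failures


pass_rate, failures = run_evals(classify_intent, EVAL_CASES)
print(f"pass rate: {pass_rate:.0%}")
for failure in failures:
    print("FAILED:", failure["input"], "->", failure["actual"])
```

The happy-path cases pass and the rephrased one does not, which is the point: the eval set is where edge cases live, and rerunning it after every prompt or model change is one simple way to measure quality over time.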

3. Can you ask good follow-up questions — and push back?

The candidates who impress us aren’t the ones who accept every AI suggestion. They’re the ones who say: “That solution works, but it has this edge case. Let me refine the prompt.” Or: “The code Claude generated here has a potential SQL injection — let me fix that.”

You should not be blindly copy-pasting everything AI generates and calling it done. You need to:

Know what a good solution looks like

Review code, whether it came from a human or an AI

Correct the AI when it’s wrong

Direct it toward the right answer when it drifts

The candidates who just let Claude do all the work and accept everything — that’s a red flag. That’s the person who will ship a security vulnerability to production because they didn’t read what they were deploying.

The Practical Gameplan

If you’re learning CS or programming today:

Phase 1: Build real foundations.

Do your data structures and algorithms by hand, without AI. Yes, really.

Understand databases: transactions, indexes, the difference between SQL and NoSQL.

Learn networking basics: what HTTP actually does, how DNS works, what happens when you call an API.

These aren’t academic exercises. They’re the vocabulary you need to review AI’s work.
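As one small example of that vocabulary, here is a sketch of what “transaction” means in practice, using Python's built-in `sqlite3` module. The `accounts` table and the `transfer` helper are invented for illustration. The idea is that both updates in a transfer apply, or neither does.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "name TEXT PRIMARY KEY, "
    "balance INTEGER CHECK (balance >= 0))"  # balances may never go negative
)
conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 50)])
conn.commit()


def transfer(conn: sqlite3.Connection, sender: str, receiver: str, amount: int) -> bool:
    """Move money atomically; return False if the transfer was rolled back."""
    try:
        # Using the connection as a context manager commits on success
        # and rolls back if an exception is raised inside the block.
        with conn:
            conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE name = ?",
                (amount, receiver),
            )
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE name = ?",
                (amount, sender),
            )
        return True
    except sqlite3.IntegrityError:
        # The CHECK constraint failed, so the credit above was undone too.
        return False


def balances(conn: sqlite3.Connection) -> dict:
    return dict(conn.execute("SELECT name, balance FROM accounts"))


ok = transfer(conn, "alice", "bob", 30)       # succeeds
failed = transfer(conn, "alice", "bob", 500)  # would overdraw alice, rolled back
print(ok, failed, balances(conn))
```

Without the transaction, the failed transfer would leave bob credited and alice untouched. That is exactly the kind of bug that hides on the happy path.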

Phase 2: Learn to build and ship AI agents.

Pick a real use case you care about.

Use your preferred model (Claude, GPT, Gemini — whatever).

Build it end to end: prompt design, API integration, error handling, basic evaluation.

Iterate. Measure. Improve.
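On the “error handling” part: real APIs time out and rate-limit, so even a toy agent benefits from a retry wrapper. Below is a minimal sketch of retry with exponential backoff. `TransientError`, `call_with_retry`, and the flaky function are hypothetical names for illustration; a real integration would catch whatever exceptions its HTTP client or SDK actually raises.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, rate limits)."""


def call_with_retry(fn, max_attempts: int = 3, base_delay: float = 0.01, sleep=time.sleep):
    """Call fn(), retrying on TransientError with exponentially growing delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # base, 2x base, 4x base, ...


def make_flaky_call(failures_before_success: int):
    """Build a fake API call that fails a fixed number of times, then succeeds."""
    state = {"calls": 0}

    def flaky():
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise TransientError("simulated timeout")
        return "ok"

    return flaky, state


flaky, state = make_flaky_call(failures_before_success=2)
result = call_with_retry(flaky)
print(result, "after", state["calls"], "calls")
```

Only retrying errors you know are transient is a deliberate choice here. Retrying everything can hide real bugs, or repeat a request that already had side effects.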

Phase 3: Develop your domain angle.

What industry do you care about? Healthcare, legal, education, sales, voice AI?

Coding + deep domain knowledge = defensible career.

“I build AI agents for X” is a much stronger sentence than “I build AI agents.”

Throughout: Review more code than you write.

Read open source projects.

Do code reviews with AI assistance — but form your own opinion first.

Practice spotting bugs, security issues, performance problems.

The ability to evaluate code is the skill AI can’t replace.

Is it getting easier or harder to be an engineer?

The bar hasn’t gotten lower. It’s shifted.

You don’t need to memorize sorting algorithms. You need to know enough to catch the AI when it gives you a bad one. You don’t need to build everything from scratch. You need to know enough to evaluate what was built. You don’t just need to code. You need product sense to direct AI toward the right solution, and people skills to understand the problem in the first place.

Code is cheap. Judgment is expensive. Iteration speed is everything.

The students who win won’t be the ones who prompt AI the best. They’ll be the ones who know when AI is wrong — and who can talk to a customer to figure out what “right” actually means.

That still requires building foundations first.

It’s the best time and the worst time for builders.

Just make sure you understand what you’re building.

I would like to extend a big thank you to Jove for sharing his insights with Big Tech Careers readers.

Want more on AI engineering careers and what it actually takes to be a Forward-Deployed Engineer? Subscribe to the Forward-Deployed Caffeine podcast. For resources, visit FDHub.org.

Related reads: Breaking Into AI FDE Roles Through a Hiring Manager’s Lens | Where Does the FDE Role Belong?

Connect: Follow Jove Zhong on LinkedIn for ongoing discussions about AI engineering, hiring in the AI era, and what the future of software development actually looks like.

Big Tech Interview Preparation Course

While I share everything for free here in this newsletter, if you need all the material in a structured course format with a deep dive into preparation methodology for behavioural interviews, I have a self-paced course for you.