Skills You Need for AI FDE Roles at OpenAI, Anthropic, NVIDIA, Databricks & Salesforce

What top AI companies really expect from forward‑deployed engineers

Hey, Prasad here 👋 I’m the voice behind the weekly newsletter “Big Tech Careers.”

In this week’s article, I share the skills you need to get hired as FDE in top AI companies.

If you like the article, click the ❤️ icon. That helps me know you enjoy reading my content.

This is a follow‑up to my earlier article on transitioning from Developer/Architect to AI FDE roles. In that piece, I argued that the knowledge gap is smaller than you think if you already work as an Solutions Architect (SA) or Software Engineer (SWE).

In this article, I go one level deeper: I analyze what AI Forward Deployed Engineer–style roles actually look like at five of the most sought‑after companies—OpenAI, Anthropic, NVIDIA, Databricks, and Salesforce—and distill the skills you really need to break into them.

You’ll notice a pattern: the core skills are the same, but each company has its own weightage for each of these skills that they look for in candidates.

The Common DNA: What All AI FDE Roles Expect

Before we pick apart company‑specific nuances, it’s worth summarizing the shared core of these roles.

Across these five companies, an AI FDE is expected to:

Embed with customers or strategic internal teams, not just sit in a central platform group.

Own end‑to‑end delivery: discovery, design, implementation, deployment, and post‑go‑live iteration.

Work directly with modern LLM tooling: RAG systems, agents, vector stores, observability, and cost controls.

Translate messy business requirements into durable technical systems and then back into business value.

Operate under ambiguity, time pressure, and partial information.

As you can see, these are combination of SA, SWE and AI skills including both technical and behavioral skills.

OpenAI: Deep AI Fluency + Full‑Stack Ownership

Positioning: OpenAI’s forward‑deployed roles skew toward high‑stakes, high‑impact deployments with customers who are building on top of frontier models. The expectation is that you act as both a product engineer and a field architect.

What they really test for:

LLM depth, not just API usage

Solid understanding of tokenization, context windows, function calling, system prompts, rate limits, and failure modes.

Ability to choose between RAG, fine‑tuning, and prompt‑engineering tradeoffs and justify them with cost/latency/quality reasoning.

Full‑stack + infra skills

Comfortable shipping production systems: backend (Python/TypeScript), basic frontend (React/Next), infra (Docker, K8s, cloud).

Can design data pipelines for logging, analytics, safety signals, and feedback loops.

Customer‑facing ambiguity handling

You walk into “we want AI” conversations and shape them into concrete use cases, milestones, and guardrails.

You’re comfortable saying “no” to unrealistic expectations while offering a simpler path that still delivers value.

How to prepare for OpenAI‑style FDE interviews:

Build and ship at least one real product on OpenAI APIs (not a toy chatbot): for example, a workflow assistant with RAG, role‑based access, analytics dashboard, and guardrails.

Be ready with case studies that show: discovery → design tradeoffs → shipping under constraints → post‑launch iteration.

Practice explaining LLM tradeoffs to a non‑technical VP in simple language.

Current FDE open roles at OpenAI

Anthropic: Safety‑First Applied AI + Long‑Term Customer Stewardship

Positioning: Anthropic’s forward‑deployed engineers lean heavily into applied AI with a safety and reliability lens. You’re expected to think about not just “does it work?” but “does it behave safely and predictably under pressure?”

What they really test for:

Applied AI engineering with a safety mindset

Familiarity with Claude‑style models, safety policies, red‑teaming concepts, and failure‑mode thinking.

Ability to design flows that mitigate prompt injection, abuse, and misuse scenarios.

Structured reasoning & evaluation

Designing evaluation harnesses, test datasets, and metrics for “quality” beyond simple accuracy: helpfulness, honesty, harmlessness.

Iterating on prompts and policies in a structured, data‑driven way.

High‑trust, long‑term client relationships

You’re more steward than “hit‑and‑run” implementer; you help customers evolve their AI maturity over months, not just ship a single feature.

Strong written communication: architecture docs, safety considerations, decision logs.

How to prepare for Anthropic‑style FDE interviews:

Build at least one “safety‑aware” AI feature: think moderation, red‑teaming tools, or evaluation dashboards that track failure modes, not just latency.

Prepare stories where you caught risks early (data misuse, policy issues, security gaps) and navigated them with stakeholders.

Practice walking through how you’d design an AI system for a regulated domain (finance, healthcare, gov) and what safety levers you’d include.

Current FDE open roles at Anthropic

NVIDIA: Systems‑Level Performance + AI Infrastructure

Positioning: NVIDIA’s forward‑deployed/architect roles are closest to AI infrastructure and performance engineering. Instead of “which prompt?” the conversation is often “which GPU, which optimization, which deployment pattern?”

What they really test for:

Strong systems & performance orientation

Comfortable reasoning about GPU utilization, batching, concurrency, throughput, and cost per token or per request.

Understanding of how model choices, quantization, and deployment frameworks impact latency and cost.

Platform and ecosystem fluency

Familiarity with NVIDIA’s AI stack (CUDA basics, Triton, TensorRT, NIMs, etc.) is a strong plus.

Bonus points for comfort with multi‑cloud deployments and hybrid/on‑prem setups.

Architect‑level customer guidance

You can sit with a customer’s infra team and co‑design reference architectures that fit their budget, latency needs, and compliance constraints.

You’re credible in conversations with both CTOs and senior infra engineers.

How to prepare for NVIDIA‑style FDE/architect interviews:

Take an existing LLM application and run simple performance experiments: batching, caching, model size swaps; document how these affect latency/cost.

Learn at least one NVIDIA‑branded component end‑to‑end (e.g., deploy a small model using Triton or a NIM‑style setup) and be able to explain the architecture.

Prepare a “customer workshop” style narrative: how you’d guide a bank or telco from “we want AI” to a GPU‑backed architecture.

Current FDE open roles at NVIDIA

Databricks: Data + GenAI + Enterprise Platforms

Positioning: Databricks’ AI Engineer / FDE roles sit at the intersection of data platforms and generative AI. Think “build AI on top of the lakehouse and ship it into customer workloads.”

What they really test for:

Data engineering + GenAI hybrid

Strong skills in Spark, SQL, and data modeling, combined with hands‑on experience building RAG/LLM apps.

Ability to design retrieval over large enterprise datasets, not just a handful of PDFs.

Platform thinking

You don’t just hack a one‑off script; you build reusable patterns that can be productized or repeated across customers.

You understand how feature stores, governance, and lineage matter for enterprise AI.

Field engineering & enablement

You can work side‑by‑side with a customer’s data team, co‑building notebooks, pipelines, and MLflow/observability setups.

Strong skills in making complex architectures understandable through diagrams, demos, and “day‑2 operations” docs.

How to prepare for Databricks‑style FDE interviews:

Build a full RAG system backed by a real data lake: ingest docs, create embeddings, store in a vector DB, expose as an API, and add monitoring.

Practice telling the story of a data‑intensive project: ingestion → transformation → governance → AI feature → monitoring.

Be ready to whiteboard how you’d integrate Databricks with a customer’s existing warehouse, BI tools, and downstream apps.

Current FDE open roles at Databricks

https://www.databricks.com/company/careers/open-positions?department=Professional%20Services&location=all (search for FDE)

Salesforce: AI Agents, Business Workflows, and Enterprise “Last Mile”

Positioning: Salesforce’s AI FDE roles are deeply tied to AI agents and business workflows inside the Salesforce ecosystem. The emphasis is on customer outcomes, not just model sophistication.

What they really test for:

Workflow‑centric AI design

You can map sales, service, or marketing workflows and decide where AI agents add value: drafting emails, suggesting next best actions, summarizing cases, etc.

You understand how to chain tools, data sources, and prompts into reliable “agentic” flows.

Ecosystem fluency

Enough understanding of Salesforce platform primitives (objects, flows, Apex, integration patterns) to wire AI into real orgs.

Comfortable mixing declarative tools with code and external AI services.

Elite customer‑facing skills

You spend a lot of time with business stakeholders: directors of sales, heads of service, program managers.

You can peel back the layers of “we want an AI bot” into root business problems and define measurable outcomes.

How to prepare for Salesforce‑style FDE interviews:

Build an “agentic” AI prototype around a business workflow: e.g., an AI that triages support tickets and drafts responses while logging everything into a CRM.

Practice explaining AI capabilities in pure business language: pipeline impact, CSAT, handle time, revenue lift.

Prepare examples where you shaped requirements, pushed back on purely “flashy demo” ideas, and delivered something that actually moved metrics.

Current FDE open roles at Salesforce

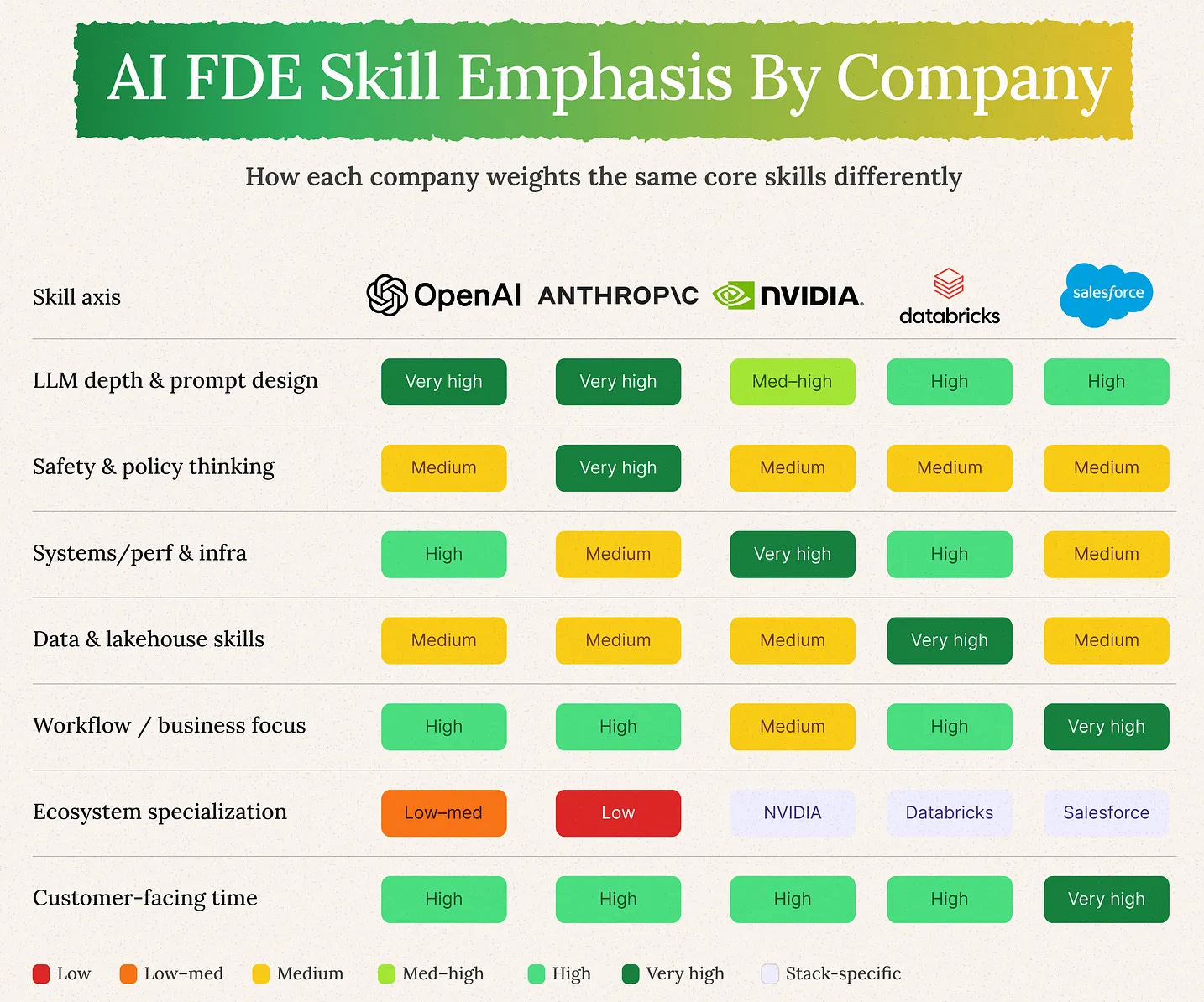

Skill Map: How the Five Companies Differ

Here’s a simple way to visualize the emphasis areas. All five require all of these skills, but the “dial” is turned up differently.

Use this to target your preparation. You don’t need to be equally strong on everything to start; you need one or two companies where your natural strengths line up with their focus areas.

A 6–8 Week Targeted Plan for These Five Companies

If my earlier article was about “closing the general AI FDE gap,” this is the company‑specific version.

Weeks 1–2: Core LLM + One Deep Project

Revisit LLM fundamentals and RAG.

Build one serious end‑to‑end app and treat it as your “OpenAI/Anthropic case study”:

Backend API, basic frontend, RAG, observability, cost tracking, and a simple safety policy.

Weeks 3–4: Pick a “Platform Anchor”

Choose one based on your target:

OpenAI: Build an end‑to‑end app on OpenAI APIs: for example, a customer‑support assistant with RAG over real docs, role‑based access, analytics dashboard, and basic safety/guardrail checks.

Anthropic: Build a Claude‑based internal assistant (e.g., policy QA or contract reviewer) with an evaluation harness that tracks quality, safety failures, and red‑team prompts.

NVIDIA: do a small performance‑oriented deployment, experiment with latency and throughput, document tradeoffs.

Databricks: build a RAG system on top of a small lakehouse‑style dataset.

Salesforce: build a workflow‑centric agent that automates part of a sales or support process (with a mock CRM if needed).

Weeks 5–6: Storytelling and Behavioral Prep

Turn your projects into 3–4 crisp case studies: problem → constraints → decisions → results → lessons.

Practice behavioral stories that highlight: discovery, production debugging, stakeholder management, expectation management.

Tailor your resume bullets and LinkedIn to explicitly mention “AI Forward Deployed Engineer–style work” and name these companies as your target pattern.

If you already have SA/SWE experience, this plan is less about learning everything from scratch and more about relabeling and aiming the skills you already use daily.

The Bottom Line

AI FDE roles at OpenAI, Anthropic, NVIDIA, Databricks, and Salesforce are deep enough in AI and engineering to ship, and deep enough in customer reality to matter.

If you’re a seasoned SA or SWE, you’re already 60–70% of the way there. The remaining 30–40% is:

Gaining AI fluency

Picking 1–2 ecosystems to anchor on them (the focus of each of them is bit different)

Learning to talk about your work through the lens of “forward‑deployed impact,” not just “feature delivery.”

Enjoyed reading the article? You might also enjoy the most popular posts from Big Tech Careers:

You’re Doing STAR Format Answers Wrong. Here’s How to Do It the Right Way

The One-Minute Elevator Pitch That Will Transform Your Interview Introduction

The Complete Blueprint for Using AI in Your Interview Process

Also, connect with me on LinkedIn to excel in your cloud and AI career.

Big Tech Interview Preparation Course

While I share everything for free here in this newsletter, if you need all the material in a structured course format with a deep dive into preparation methodology for next behavioral interviews, I have a self-paced course for you.

Here is what is covered in the 3+ hour course:

Check the course page for more details.

Essentially, take the Palantir approach

Thank you for the detailed post . I see some FDE data engineer roles in US . How would they be different from regular data engineer roles ? Any insights?